Update 03/15/2023 12:30pm ET: In a recent blog post responding to the Reddit post described below, Samsung attempts to clarify how its Galaxy cameras combine super-resolution technology with AI to produce high-quality images of the Moon.

According to Samsung, Galaxy devices have a “Scene Optimizer” feature that uses AI to recognize the Moon as a specific object during the photo-taking process, and applies the feature’s detail enhancement engine to the shot. “When you’re taking a photo of the moon, your Galaxy device’s camera system will harness this deep learning-based AI technology, as well as multi-frame processing in order to further enhance details.”

When you photograph the Moon with Scene Optimizer enabled, your Galaxy camera combines more than 10 images taken at 25x zoom or higher. According to Samsung, images captured at these zoom levels need to be enhanced for clarity, so once the camera recognizes the Moon as an object, Scene Optimizer’s detail enhancement engine works alongside Super Resolution technology to sharpen the image.

Read Samsung’s full explanation here.

The original story is below:

A Redditor is making a bold claim about Samsung’s Galaxy S23 Ultra and its Moon photography.

According to ‘ibreakphotos’ on the r/Android subreddit, “Samsung space zoom moon shots are fake.”

“Many of us have witnessed the breathtaking moon photos taken with the latest zoom lenses, starting with the S20 Ultra. Nevertheless, I’ve always had doubts about their authenticity, as they appear almost too perfect. While these images are not necessarily outright fabrications, neither are they entirely genuine. Let me explain,” wrote u/ibreakphotos.

According to the user, Samsung adds detail to Moon shots where none exists, with AI, rather than the phone’s camera optics, doing most of the heavy lifting.

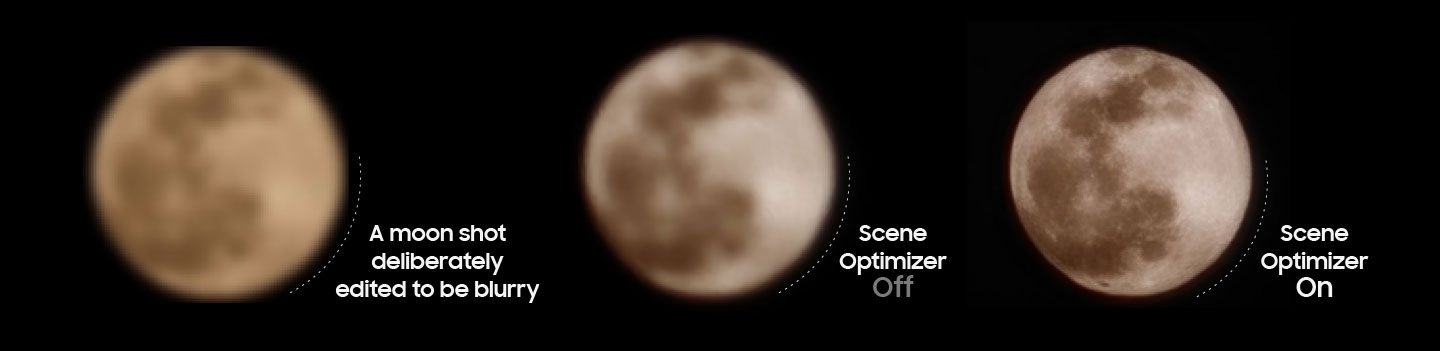

To test the claim, the Redditor downloaded a high-resolution image of the Moon from the internet and downsized it to 170 x 170 pixels. They then blurred the photo so that no surface detail of the Moon remained visible. u/ibreakphotos then displayed the image full screen on their monitor, turned off the lights, moved to the other end of the room and zoomed in on the blurred image with their Samsung phone.
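The preparation step can be sketched in a few lines. This is a minimal, hypothetical reconstruction in pure Python, assuming a grayscale image stored as a list of rows; the post as described here does not say which tool, blur type or blur strength was used, so the box blur and its radius are stand-ins. The helper names `downsample`, `box_blur` and `detail_energy` are illustrative, and `detail_energy` is a crude, made-up proxy for how much fine structure survives each step.

```python
from typing import List

Image = List[List[float]]  # grayscale pixels, one list per row


def downsample(img: Image, size: int) -> Image:
    """Shrink to size x size by averaging each block of source pixels.

    Assumes the source is at least size x size, so no block is empty.
    """
    h, w = len(img), len(img[0])
    out: Image = []
    for oy in range(size):
        y0, y1 = oy * h // size, (oy + 1) * h // size
        row = []
        for ox in range(size):
            x0, x1 = ox * w // size, (ox + 1) * w // size
            block = [img[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def box_blur(img: Image, radius: int) -> Image:
    """Replace each pixel with the mean of its (2*radius+1)^2 neighbourhood,
    clamping coordinates at the image edges (a stand-in for the unknown blur)."""
    h, w = len(img), len(img[0])
    out: Image = []
    for y in range(h):
        row = []
        for x in range(w):
            total = 0.0
            for dy in range(-radius, radius + 1):
                yy = min(max(y + dy, 0), h - 1)
                for dx in range(-radius, radius + 1):
                    xx = min(max(x + dx, 0), w - 1)
                    total += img[yy][xx]
            row.append(total / (2 * radius + 1) ** 2)
        out.append(row)
    return out


def detail_energy(img: Image) -> float:
    """Mean squared difference between horizontal neighbours: a rough proxy
    for fine detail such as crater edges."""
    diffs = [(row[x + 1] - row[x]) ** 2 for row in img for x in range(len(row) - 1)]
    return sum(diffs) / len(diffs)


if __name__ == "__main__":
    # Hypothetical stand-in for the high-res Moon photo: 680 x 680 vertical
    # stripes, 16 px wide, so the pattern still survives a 4x downsizing.
    source = [[255.0 if (x // 16) % 2 == 0 else 0.0 for x in range(680)]
              for _ in range(680)]
    small = downsample(source, 170)      # the 170 x 170 step from the post
    blurred = box_blur(small, radius=4)  # blur strength is an assumption
    print(f"detail after downsizing: {detail_energy(small):.0f}")
    print(f"detail after blurring:   {detail_energy(blurred):.0f}")
```

On this synthetic input the blur removes nearly all of the fine structure that the downsizing preserved, which mirrors the premise of the experiment: little or no fine detail is left on screen for the camera to capture.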

The result is shown in the image above: the Redditor photographed the blurred image on the left with their phone and got the image on the right.

The details in the resulting photo could not have been captured optically, because they were not visible in the blurred image in the first place. That leads me to ask: are all Samsung Ultra Moon shots essentially fake? Well, the images are not totally fake, but they’re not entirely genuine, either.

“Samsung is leveraging an AI model to put craters and other details on places which were just a blurry mess,” wrote the Reddit user. “There’s a difference between additional processing a la super-resolution, when multiple frames are combined to recover detail which would otherwise be lost, and this, where you have a specific AI model trained on a set of moon images, in order to recognize the moon and slap on the moon texture on it (when there is no detail to recover in the first place, as in this experiment).”
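The distinction the Redditor draws can be illustrated with plain frame averaging (stacking), a simpler relative of multi-frame super-resolution, which additionally exploits small shifts between frames. The sketch below is hypothetical and one-dimensional for brevity: it simulates several noisy captures of an already-blurred scene and averages them. The signal shape, noise level, frame counts and every function name are assumptions for illustration only; this is not Samsung's pipeline.

```python
import random
from typing import List


def box_blur_1d(signal: List[float], radius: int) -> List[float]:
    """Moving average with edge clamping (stands in for the blurred screen)."""
    n = len(signal)
    return [
        sum(signal[min(max(i + d, 0), n - 1)] for d in range(-radius, radius + 1))
        / (2 * radius + 1)
        for i in range(n)
    ]


def capture(scene: List[float], noise_sd: float, rng: random.Random) -> List[float]:
    """One simulated frame: the scene plus independent Gaussian sensor noise."""
    return [v + rng.gauss(0.0, noise_sd) for v in scene]


def stack(frames: List[List[float]]) -> List[float]:
    """Average corresponding samples across all frames."""
    return [sum(col) / len(frames) for col in zip(*frames)]


def mse(a: List[float], b: List[float]) -> float:
    """Mean squared error between two equal-length signals."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical "crater" detail: a square wave with an 8-sample period.
    detailed = [255.0 if (i // 4) % 2 == 0 else 0.0 for i in range(400)]
    on_screen = box_blur_1d(detailed, radius=4)  # all the camera can see
    for n_frames in (1, 10, 50):
        frames = [capture(on_screen, 20.0, rng) for _ in range(n_frames)]
        result = stack(frames)
        print(f"{n_frames:>2} frames: error vs blurred scene {mse(result, on_screen):8.1f}, "
              f"error vs original detail {mse(result, detailed):8.1f}")
```

Run as a script, the error against the blurred scene should shrink as more frames are averaged, while the error against the original detail stays large: stacking recovers what the sensor actually saw, not detail that was never there.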

It’s worth noting that Samsung has always been fairly open about using AI when taking pictures of the Moon. The phone relies on a Moon recognition engine, built with AI and deep learning models, that was trained on images of the Moon in phases ranging from full to crescent.

This is How Samsung S23 Ultra is able to take moon photographs pic.twitter.com/iDGPoPKNw0

— Utsav Techie (@utsavtechie) March 12, 2023

Check out u/ibreakphotos’ full experiment on Reddit here.

Image credit: u/ibreakphotos

Source: u/ibreakphotos

MobileSyrup may earn a commission from purchases made via our links, which helps fund the journalism we provide free on our website. These links do not influence our editorial content. Support us here.