AI has taken the world by storm over the last year, and AI-generated art has been one of the areas at the forefront.

The technology, which creates art from prompts provided by users, has also been a source of controversy. As recently as January, stock content giant Getty Images sued AI firm Stability AI, alleging that the firm unlawfully copied and processed "millions" of Getty's copyrighted images without a license. Stability AI's Stable Diffusion allegedly used material scraped from the internet and from Getty's catalogue without permission.

Before Getty took legal action, Stability AI was already facing a class action lawsuit in the United States. The suit was reportedly filed by three artists who claim the AI tool uses their images and infringes their copyright (via Barron's).

To help prevent this kind of intellectual property theft, researchers from the University of Chicago's SAND Lab have created a tool called Glaze, which aims to stop AI software from learning and imitating artists' styles, as reported by MakeUseOf.

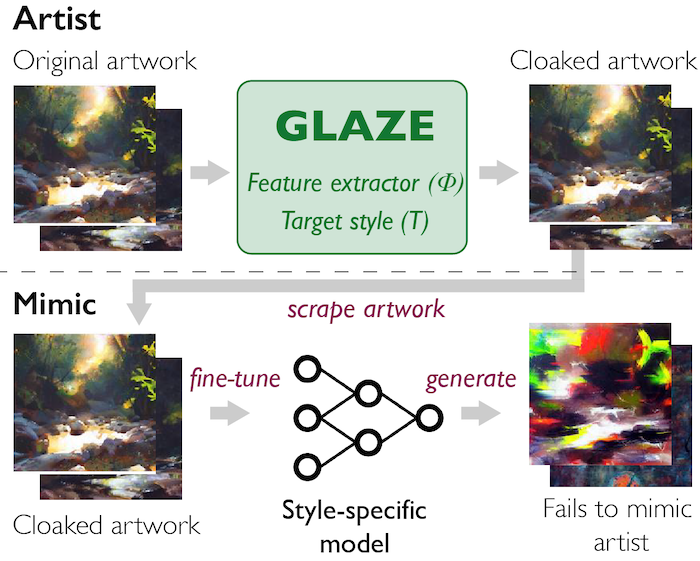

Essentially, the tool adds a "very small change" to an artwork before it is shared online. This change, which Glaze calls "cloaking," is barely visible to the human eye, so the cloaked version looks nearly identical to the original while being designed to prevent AI models from copying the art or its style. The changes are more visible on art with flat colors and smooth backgrounds. "We refer to these added changes as a 'style cloak' and changed artwork as 'cloaked artwork,'" wrote Glaze.

When an AI model trains on cloaked versions of an artist's work, it learns a style that differs from the artist's original visual style. As a result, when the model is asked to mimic that artist, it produces art that is distinctly different from the artist's actual style.

The University of Chicago SAND Lab's research paper on Glaze is publicly available, and Glaze Beta2 is now available for download.

Image credit: Glaze

MobileSyrup may earn a commission from purchases made via our links, which helps fund the journalism we provide free on our website. These links do not influence our editorial content.